Double-precision floating-point format

Double-precision floating-point format is a computer number format that occupies 8 bytes (64 bits) in computer memory and represents a wide dynamic range of values by using a floating radix point.

Double-precision floating-point format usually refers to binary64, as specified by the IEEE 754 standard, not to the 64-bit decimal format decimal64. In older computers, different floating-point formats of 8 bytes were used, e.g., GW-BASIC's double-precision data type was the 64-bit MBF floating-point format.


IEEE 754 double-precision binary floating-point format: binary64

Double-precision binary floating-point is a commonly used format on PCs, due to its wider range over single-precision floating point, in spite of its performance and bandwidth cost. As with single-precision floating-point format, it lacks precision on integer numbers when compared with an integer format of the same size. It is commonly known simply as double. The IEEE 754 standard specifies a binary64 as having:

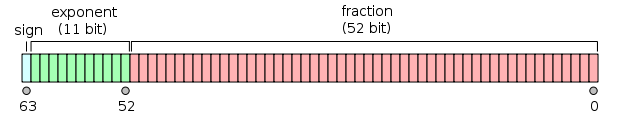

- Sign bit: 1 bit

- Exponent: 11 bits

- Significand precision: 53 bits (52 explicitly stored)

This gives 15–17 significant decimal digits precision. If a decimal string with at most 15 significant digits is converted to IEEE 754 double precision representation and then converted back to a string with the same number of significant digits, then the final string should match the original. If an IEEE 754 double precision is converted to a decimal string with at least 17 significant digits and then converted back to double, then the final number must match the original.[1]
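The two round-trip guarantees above can be checked with a short sketch. The helper names `decimal_survives_double` and `double_survives_decimal` are illustrative only, and the sketch assumes that the Python `float` type is an IEEE 754 binary64, which holds on common platforms.

```python
from decimal import Decimal


def decimal_survives_double(text: str) -> bool:
    """Decimal string (at most 15 significant digits) -> double -> 15-digit string."""
    back = format(float(text), ".15g")
    # Compare numerically so that formatting differences (e.g. trailing zeros) do not matter.
    return Decimal(back) == Decimal(text)


def double_survives_decimal(x: float, digits: int = 17) -> bool:
    """Double -> decimal string with `digits` significant digits -> double."""
    return float(format(x, f".{digits}g")) == x


print(decimal_survives_double("0.1"))
print(decimal_survives_double("123456789.012345"))
print(double_survives_decimal(0.1 + 0.2))             # 17 digits are enough
print(double_survives_decimal(0.1 + 0.2, digits=15))  # 15 digits are not always enough
```

The last call returns `False`: 0.1 + 0.2 prints as 0.3 when limited to 15 significant digits, and 0.3 converts to a different double.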

The format is written with the significand having an implicit integer bit of value 1 (except for special data, see the exponent encoding below). With the 52 bits of the fraction (the stored part of the significand) appearing in the memory format, the total precision is therefore 53 bits (approximately 16 decimal digits, $53 \log_{10} 2 \approx 15.955$). The bits are laid out, from most significant to least significant, as the sign (bit 63), the exponent (bits 62 to 52), and the fraction (bits 51 to 0).

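The real value assumed by a given 64-bit double-precision datum with a given biased exponent $e$ and a 52-bit fraction $b_{51} b_{50} \ldots b_0$ is

$$(-1)^{\text{sign}} \left(1.b_{51} b_{50} \ldots b_0\right)_2 \times 2^{e - 1023}$$

or

$$(-1)^{\text{sign}} \left(1 + \sum_{i=1}^{52} b_{52-i}\, 2^{-i}\right) \times 2^{e - 1023}$$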

Between $2^{52}$ = 4,503,599,627,370,496 and $2^{53}$ = 9,007,199,254,740,992, the representable numbers are exactly the integers. For the next range, from $2^{53}$ to $2^{54}$, everything is multiplied by 2, so the representable numbers are the even ones, etc. Conversely, for the previous range from $2^{51}$ to $2^{52}$, the spacing is 0.5, etc.

The spacing between representable numbers in the range from $2^{n}$ to $2^{n+1}$ is $2^{n-52}$, which is at most $2^{-52}$ as a fraction of the numbers themselves. The maximum relative rounding error when rounding a number to the nearest representable one (the machine epsilon) is therefore $2^{-53}$.
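The spacing rule can be observed directly. The following is a minimal sketch that assumes Python 3.9 or later (for `math.ulp`) and a binary64 `float`; the helper name `spacing_in_binade` is hypothetical.

```python
import math
import sys


def spacing_in_binade(n: int) -> float:
    """Gap between neighbouring doubles in [2**n, 2**(n+1)), measured with math.ulp."""
    return math.ulp(2.0 ** n)


for n in (51, 52, 53):
    # The measured gap should equal 2**(n - 52).
    print(n, spacing_in_binade(n), 2.0 ** (n - 52))

# From 2**53 upward the gap is 2, so adding 1 is lost to rounding.
print(2.0 ** 53 + 1.0 == 2.0 ** 53)

# sys.float_info.epsilon is the gap between 1.0 and the next double, 2**-52.
print(sys.float_info.epsilon == 2.0 ** -52)
```

Note that `sys.float_info.epsilon` reports the gap above 1.0, which is $2^{-52}$. That is twice the maximum relative rounding error of $2^{-53}$ quoted above, because a value rounded to nearest is at most half a gap away from its neighbour.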

The 11-bit width of the exponent allows the representation of numbers with magnitudes between about $10^{-308}$ and $10^{308}$, with full 15–17 decimal digits of precision. By compromising precision, the subnormal representation allows even smaller values, down to about $5 \times 10^{-324}$.
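The limits of the range, and the loss of precision among subnormals, can be inspected with a small sketch. It assumes Python 3.9 or later and a binary64 `float`; the variable names are illustrative.

```python
import math
import sys

largest = sys.float_info.max                 # largest finite double
smallest_normal = sys.float_info.min         # smallest positive normal double, 2**-1022
smallest_subnormal = math.ldexp(1.0, -1074)  # smallest positive subnormal double, 2**-1074

print(largest, smallest_normal, smallest_subnormal)

# Going past either end of the range gives infinity or zero.
print(largest * 2 == math.inf)
print(smallest_subnormal / 2 == 0.0)

# Subnormals trade precision for range: their relative spacing is far
# coarser than the 2**-52 of normal numbers.
x = 1e-320
print(math.ulp(x) / x)
print(math.ulp(1.0))
```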

Exponent encoding

The double-precision binary floating-point exponent is encoded using an offset-binary representation with a zero offset of 1023, known as the exponent bias in the IEEE 754 standard. Examples of such representations, with the stored exponent in parentheses and the true exponent on the right, would be:

- $E_{\min}$ (1) = −1022

- $E$ (50) = −973

- $E_{\max}$ (2046) = 1023

Thus, as defined by the offset-binary representation, in order to get the true exponent, the exponent bias of 1023 has to be subtracted from the written exponent.
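The subtraction of the bias can be sketched as follows. The names `BIAS`, `biased_exponent` and `true_exponent` are illustrative, and the sketch assumes a binary64 `float`. It reads the 11 exponent bits from the big-endian byte image of the number produced by the standard `struct` module.

```python
import struct
import sys

BIAS = 1023  # exponent bias of binary64


def biased_exponent(x: float) -> int:
    """Return the 11-bit exponent field exactly as stored."""
    bits = struct.unpack(">Q", struct.pack(">d", x))[0]
    return (bits >> 52) & 0x7FF


def true_exponent(x: float) -> int:
    """Return the stored exponent minus the bias (meaningful for normal numbers only)."""
    return biased_exponent(x) - BIAS


for value in (2.0 ** -1022, 2.0 ** -973, 1.0, sys.float_info.max):
    print(value, biased_exponent(value), true_exponent(value))
```

The stored values 0 and 2047 are reserved for zeros, subnormals, infinities and NaNs, so `true_exponent` is not meaningful for those inputs.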

The exponents $\text{000}_{16}$ and $\text{7ff}_{16}$ have a special meaning:

- $\text{000}_{16}$ is used to represent a signed zero (if F = 0) and subnormals (if F ≠ 0); and

- $\text{7ff}_{16}$ is used to represent ∞ (if F = 0) and NaNs (if F ≠ 0),

where F is the fractional part of the significand. All bit patterns are valid encodings.

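Except for the above exceptions, the entire double-precision number is described by:

$$(-1)^{\text{sign}} \times 2^{e - 1023} \times 1.\text{fraction}$$

In the case of subnormals ($e = 0$), the double-precision number is described by:

$$(-1)^{\text{sign}} \times 2^{1 - 1023} \times 0.\text{fraction} = (-1)^{\text{sign}} \times 2^{-1022} \times 0.\text{fraction}$$

Here $e$ is the stored (biased) exponent, and fraction stands for the 52 bits of F read as binary digits after the radix point.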
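The normal case, the subnormal case and the two special exponents can be combined into a small decoder. This is a sketch rather than a reference implementation: the function name `decode_binary64` is hypothetical, and the result is built with `math.ldexp`, which scales an integer significand by a power of two without rounding for these inputs.

```python
import math
import struct


def decode_binary64(bits: int) -> float:
    """Decode a 64-bit pattern by hand, following the binary64 field layout."""
    sign = bits >> 63
    e = (bits >> 52) & 0x7FF            # stored (biased) exponent
    fraction = bits & ((1 << 52) - 1)   # 52-bit fraction field F

    if e == 0x7FF:                      # infinities and NaNs
        magnitude = math.nan if fraction else math.inf
    elif e == 0:                        # zeros and subnormals: 0.fraction * 2**-1022
        magnitude = math.ldexp(fraction, -1022 - 52)
    else:                               # normal numbers: 1.fraction * 2**(e - 1023)
        magnitude = math.ldexp((1 << 52) | fraction, e - 1023 - 52)
    return -magnitude if sign else magnitude


def reinterpret(bits: int) -> float:
    """Let the platform decode the same pattern, for comparison."""
    return struct.unpack(">d", bits.to_bytes(8, "big"))[0]


for pattern in (0x3FF0000000000000, 0xC000000000000000,
                0x0000000000000001, 0x7FEFFFFFFFFFFFFF):
    print(f"{pattern:016x}", decode_binary64(pattern), reinterpret(pattern))
```

Comparing against `struct.unpack` is only a sanity check; it assumes that the platform's `double` is itself binary64.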

Endianness

Although the ubiquitous x86 processors of today use little-endian storage for all types of data (integer, floating point, BCD), there are a few historical machines where floating-point numbers are represented in big-endian form while integers are represented in little-endian form.[2] Some old ARM processors use a representation for double-precision numbers that is half little-endian and half big-endian: each of the two 32-bit words is stored in little-endian order, like integer registers, but the most significant word comes first.

Because there have been many floating-point formats with no "network" standard representation for them, the XDR standard uses big-endian IEEE 754 as its representation. It may therefore appear strange that the widespread IEEE 754 floating-point standard does not specify endianness.[3] Theoretically, this means that even standard IEEE floating-point data written by one machine might not be readable by another. However, on modern standard computers (i.e., those implementing IEEE 754), one may in practice safely assume that the endianness is the same for floating-point numbers as for integers, making the conversion straightforward regardless of data type. (Small embedded systems using special floating-point formats may be another matter, however.)
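The effect of byte order on the stored image of a double can be seen with the standard `struct` module. This is a minimal sketch, assuming a binary64 `float`; the variable names are illustrative.

```python
import struct
import sys

value = 1.0  # bit pattern 3ff0 0000 0000 0000 in hexadecimal

little = struct.pack("<d", value)  # least significant byte first
big = struct.pack(">d", value)     # most significant byte first, as XDR uses

print(little.hex())  # 000000000000f03f
print(big.hex())     # 3ff0000000000000

# Stating the byte order explicitly on both sides makes the exchange portable.
print(struct.unpack(">d", big)[0] == value)

# The native layout normally follows the integer byte order of the machine.
print(sys.byteorder, struct.pack("=d", value).hex())
```

Writing data with an explicit `<` or `>` prefix, rather than relying on the native order, avoids the portability problem described above.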

Double-precision examples

- $\text{3ff0 0000 0000 0000}_{16}$ = 1

- $\text{3ff0 0000 0000 0001}_{16}$ ≈ 1.0000000000000002, the smallest number > 1

- $\text{3ff0 0000 0000 0002}_{16}$ ≈ 1.0000000000000004

- $\text{4000 0000 0000 0000}_{16}$ = 2

- $\text{c000 0000 0000 0000}_{16}$ = −2

- $\text{0000 0000 0000 0001}_{16}$ = $2^{-1022-52}$ = $2^{-1074}$ ≈ $5 \times 10^{-324}$ (min subnormal positive double)

- $\text{000f ffff ffff ffff}_{16}$ = $2^{-1022} - 2^{-1022-52}$ ≈ $2.2250738585072009 \times 10^{-308}$ (max subnormal double)

- $\text{0010 0000 0000 0000}_{16}$ = $2^{-1022}$ ≈ $2.2250738585072014 \times 10^{-308}$ (min normal positive double)

- $\text{7fef ffff ffff ffff}_{16}$ = $(1 + (1 - 2^{-52})) \times 2^{1023}$ ≈ $1.7976931348623157 \times 10^{308}$ (max double)

- $\text{0000 0000 0000 0000}_{16}$ = 0

- $\text{8000 0000 0000 0000}_{16}$ = −0

- $\text{7ff0 0000 0000 0000}_{16}$ = Infinity

- $\text{fff0 0000 0000 0000}_{16}$ = −Infinity

- $\text{7fff ffff ffff ffff}_{16}$ = NaN

- $\text{3fd5 5555 5555 5555}_{16}$ ≈ 1/3

By default, 1/3 rounds down, instead of up like single precision, because of the odd number of bits in the significand.

In more detail:

Given the hexadecimal representation $\text{3FD5 5555 5555 5555}_{16}$,

Sign = 0

Exponent = $\text{3FD}_{16}$ = 1021

Exponent Bias = 1023 (constant value; see above)

Fraction = $\text{5 5555 5555 5555}_{16}$

Value = $2^{(\text{Exponent} - \text{Exponent Bias})} \times 1.\text{Fraction}$ (note that Fraction must not be converted to decimal here)

= $2^{-2} \times (\text{15 5555 5555 5555}_{16} \times 2^{-52})$

= $2^{-54} \times \text{15 5555 5555 5555}_{16}$

= 0.333333333333333314829616256247390992939472198486328125

≈ 1/3
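The same steps can be followed with exact rational arithmetic. The sketch below uses the standard `fractions` and `decimal` modules and assumes a binary64 `float`; the variable names are illustrative.

```python
from decimal import Decimal
from fractions import Fraction

bits = 0x3FD5555555555555

sign = bits >> 63                    # 0
exponent = (bits >> 52) & 0x7FF      # 0x3FD = 1021
fraction = bits & ((1 << 52) - 1)    # 0x5555555555555

# 1.Fraction as an integer: the implicit leading 1 followed by the 52 fraction bits.
significand = (1 << 52) | fraction   # 0x15555555555555

# Value = 2**(exponent - 1023) * significand * 2**-52, kept exact as a rational number.
exact = Fraction(significand) * Fraction(2) ** (exponent - 1023 - 52)

print(exact == Fraction(1 / 3))      # same value as the double nearest to 1/3
print(Fraction(1, 3) - exact)        # positive, so 1/3 was rounded down
print(Decimal(1 / 3))                # full decimal expansion of the stored value
```

The difference printed on the second line is positive, which matches the statement above that 1/3 rounds down in double precision.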

Execution speed with double-precision arithmetic

Using double-precision floating-point variables and mathematical functions (e.g., sin, cos, atan2, log, exp and sqrt) is slower than working with their single-precision counterparts. One area of computing where this is a particular issue is parallel code running on GPUs. For example, when using NVIDIA's CUDA platform on video cards designed for gaming, calculations with double precision take 3 to 24 times longer to complete than calculations using single precision.[4]

Implementations

Doubles are implemented in many programming languages in different ways, such as the following. On processors with only dynamic precision, such as x86 without SSE2 (or when SSE2 is not used, for compatibility purposes) and with extended precision used by default, software may have difficulty fulfilling some requirements.

Lua

In Lua version 5.2[5] and earlier, all arithmetic is done using double-precision floating-point arithmetic. Also, automatic type conversions between doubles and strings are provided.

JavaScript

As specified by the ECMAScript standard, all arithmetic in JavaScript shall be done using double-precision floating-point arithmetic.[6]

C and C++

C and C++ offer a wide variety of arithmetic types. Double precision is not required by the standards (except by the optional annex F of C99, covering IEEE 754 arithmetic), but on most systems, the double type corresponds to double precision. However, on 32-bit x86 with extended precision by default, some compilers may not conform to the C standard and/or the arithmetic may suffer from double-rounding issues.[7]

See also

- IEEE floating point, IEEE standard for floating-point arithmetic (IEEE 754)

Notes and references

- ↑ William Kahan (1 October 1997). "Lecture Notes on the Status of IEEE Standard 754 for Binary Floating-Point Arithmetic" (PDF).

- ↑ "Floating point formats".

- ↑ "pack – convert a list into a binary representation".

- ↑ http://www.tomshardware.com/reviews/geforce-gtx-titan-gk110-review,3438-3.html

- ↑ http://www.lua.org/manual/5.2/manual.html

- ↑ ECMA-262 ECMAScript Language Specification (PDF) (5th ed.). Ecma International. p. 29, §8.5 The Number Type.

- ↑ GCC Bug 323 - optimized code gives strange floating point results